Graphics chip maker Nvidia on Wednesday responded to a threat posed by Alphabet's latest effort to use its own chips for artificial intelligence workloads. That effort could eventually blunt Nvidia's growth, which is being driven by companies using Nvidia's chips for AI processing.

Nvidia has become closely associated with AI computing over the past few years, and its stock has shot up as investors have caught on and sales have increased. Shares have appreciated more than 200 percent in the last year, and almost 30 percent since the beginning of 2017.

Nvidia stock fell last week after Google announced the second generation of its tensor processing unit (TPU), Google's most competitive chip yet, but the shares have since rebounded.

'We have one for free'

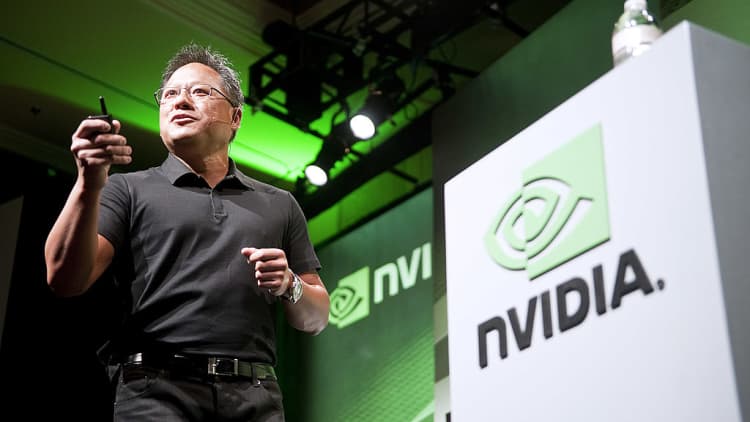

In a blog post Wednesday, Nvidia CEO Jensen Huang emphasized that his company has an ongoing collaboration with Google, while also indirectly belittling part of Google's tensor processing unit initiative. Additionally, Nvidia announced plans to release certain AI software under an open-source license.

"We want to see the fastest possible adoption of AI everywhere. No one else needs to invest in building an inferencing TPU [what Google is doing]. We have one for free — designed by some of the best chip designers in the world."

Unlike Nvidia's processors, Google's TPUs are not available for companies to buy, but they will become available to rent through the Google Cloud Platform.

Deep learning, a type of AI that Google and other tech companies have embraced for a variety of applications, typically involves two phases. First, researchers train computers, often ones equipped with GPUs, to do things like detect cars in photos by feeding them large amounts of data. Second, once the computers have been trained, researchers send them new data and have them make predictions about it based on what they have learned.
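To make the two phases concrete, here is a deliberately tiny sketch. It is not deep learning and not how Google or Nvidia do it: a single-neuron perceptron stands in for a deep network, and the two "car" features and their labels are made-up toy data. The point is only the split between a training step that adjusts weights from labeled examples and an inference step that applies the frozen weights to data the model has not seen.

```python
# Toy illustration of the two phases: training, then inference.
# Assumption: a single perceptron on hypothetical 2-D features stands in
# for a real deep network; the data below is invented for illustration.

def train(samples, labels, epochs=20, lr=0.1):
    """Phase 1 (training): adjust weights by feeding labeled examples."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), y in zip(samples, labels):
            pred = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            err = y - pred  # 0 when correct, +1 or -1 when wrong
            w[0] += lr * err * x1
            w[1] += lr * err * x2
            b += lr * err
    return w, b

def infer(model, sample):
    """Phase 2 (inference): apply the trained weights to new data."""
    w, b = model
    x1, x2 = sample
    return 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0

# Hypothetical labeled data: label 1 ("car") when both features are large.
training_data = [(0.9, 0.8), (0.8, 0.9), (0.1, 0.2), (0.2, 0.1)]
training_labels = [1, 1, 0, 0]

model = train(training_data, training_labels)
print(infer(model, (0.85, 0.95)))  # → 1 (new sample, predicted "car")
print(infer(model, (0.15, 0.05)))  # → 0 (new sample, predicted "not a car")
```

Training is the expensive, repeated part (many passes over the data), while inference is a single cheap evaluation per new input, which is why chips can be designed for one phase or both.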

Google unveiled its first-generation TPU last year, but that chip was meant only for inference, the term for the second phase of deep learning. The second version, announced last week, can handle training, the first phase, as well as inference, which makes Google's TPUs more of a threat to Nvidia's GPUs.

But companies can access Nvidia's GPUs today on the Google Cloud Platform, and Nvidia works alongside Google to improve the performance of the Google-led open-source TensorFlow framework for AI, Huang wrote.

"AI is the greatest technology force in human history. Efforts to democratize AI and enable its rapid adoption are great to see," Huang wrote.

The new TPU delivers 45 teraflops of performance, while Nvidia's latest GPU, named Volta, provides 120 teraflops, Huang wrote. Google will soon let third-party developers use Cloud TPUs; each Cloud TPU will run on a board containing four TPUs, delivering a combined 180 teraflops.
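The figures above can be checked with simple arithmetic. This sketch uses only the numbers quoted in the article; it assumes they are headline peak figures, and the article does not say whether the TPU and Volta numbers are measured the same way, so the ratios are rough comparisons at best.

```python
# Back-of-the-envelope arithmetic using only the figures quoted above.
TPU_CHIP_TFLOPS = 45   # second-generation TPU, per chip (as quoted)
TPUS_PER_BOARD = 4     # TPU chips on each Cloud TPU board (as quoted)
VOLTA_TFLOPS = 120     # Nvidia Volta GPU (as quoted by Huang)

board_tflops = TPU_CHIP_TFLOPS * TPUS_PER_BOARD
print(board_tflops)                              # → 180
print(round(VOLTA_TFLOPS / TPU_CHIP_TFLOPS, 2))  # → 2.67 (one Volta vs. one TPU chip)
print(board_tflops / VOLTA_TFLOPS)               # → 1.5 (four-TPU board vs. one Volta)
```

By these quoted numbers, a single Volta outpaces a single TPU chip, while a four-chip Cloud TPU board outpaces a single Volta.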