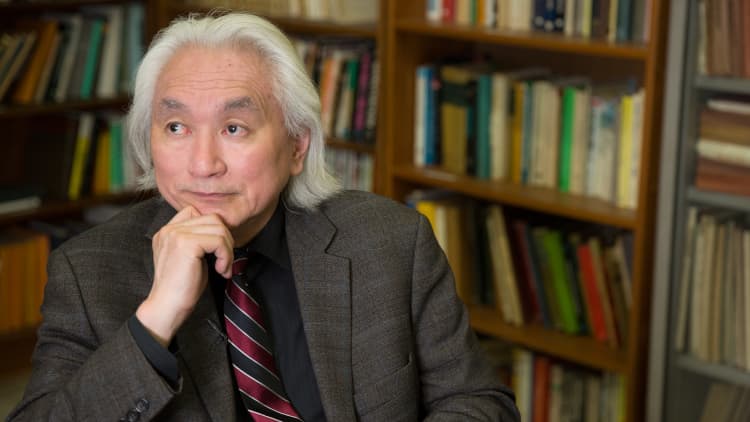

The moment that humanity is forced to take the threat of artificial intelligence seriously might be fast approaching, according to futurist and theoretical physicist Michio Kaku.

In an interview with CNBC's "The Future of Us," Kaku voiced concern over the earlier-than-expected victory that Google's deep learning machine notched this past March, when it beat a human master of the ancient board game Go. Unlike chess, which has far fewer possible positions, Go allows for more board configurations than there are atoms in the observable universe, and thus cannot be mastered by brute-force computation alone.

"This machine had to have something different, because you can't calculate every known atom in the universe — it has learning capabilities," Kaku said. "That's what's novel about this machine, it learns a little bit, but still it has no self awareness ... so we have a long way to go."

But that self-awareness might not be far off, according to tech minds such as Elon Musk and Stephen Hawking, who have warned that it should be avoided for the sake of future human survival.

And while Kaku agreed that accelerating advances in artificial intelligence could present a dilemma for humanity, he was hesitant to predict that such a problem would emerge in his lifetime. "By the end of the century this becomes a serious question, but I think it's way too early to run for the hills," he said.

"I think the 'Terminator' idea is a reasonable one — that is that one day the internet becomes self-aware and simply says that humans are in the way," he said. "After all, if you meet an ant hill and you're making a 10-lane super highway, you just pave over the ants. It's not that you don't like the ants, it's not that you hate ants, they are just in the way."