Amazon said on Wednesday that it was reviewing its website after a British television report said the online retail giant’s algorithms were automatically suggesting bomb-making ingredients that were “Frequently bought together.”

The news is particularly timely in Britain, where the authorities are investigating a terrorist attack last week on London’s Underground subway system. The attack involved a crude explosive in a bucket inside a plastic bag, which detonated on a train during the morning rush.

The news report is the latest example of a technology company drawing criticism for an apparently faulty algorithm. Google and Facebook have come under fire for allowing advertisers to direct ads to users who searched for, or expressed interest in, racist sentiments and hate speech. Growing awareness of these automated systems has been accompanied by calls for tech firms to take more responsibility for the content on their sites.

Amazon customers buying products that were innocent enough on their own, like cooking ingredients, received “Frequently bought together” prompts for other items that would help them produce explosives, according to Channel 4 News.

Although many of the ingredients mentioned by Channel 4 News are not illegal on their own, the report said there had been successful prosecutions in Britain against individuals who bought chemicals and components that can produce explosives.

Amazon said in a statement that all the products sold on its website “must adhere to our selling guidelines and we only sell products that comply with U.K. laws.”

“In light of recent events, we are reviewing our website to ensure that all these products are presented in an appropriate manner,” the statement continued. “We also continue to work closely with police and law enforcement agencies when circumstances arise where we can assist their investigations.”

The company declined to comment further.

Other tech companies have been in the spotlight for the algorithms that fuel their websites.

ProPublica, a nonprofit news outlet, revealed last week that Facebook had enabled advertisers to seek out self-described “Jew haters” and other anti-Semitic topics. In response, Facebook said it would restrict how advertisers targeted their audiences.

BuzzFeed also reported on how Google allowed the sale of ads tied to racist and bigoted keywords, automatically suggesting more offensive terms. Google said it would work harder to halt the offensive ads.

Facebook has also come under scrutiny for potentially being used to influence people during the American presidential election last year. It disclosed this month that fake accounts based in Russia had purchased more than $100,000 worth of ads on divisive issues in the lead-up to the vote.

Last week’s attack at Parsons Green train station was the fifth terrorist attack in Britain in a matter of months. Several people have been arrested in the investigation of the explosion, in which dozens of people were wounded.

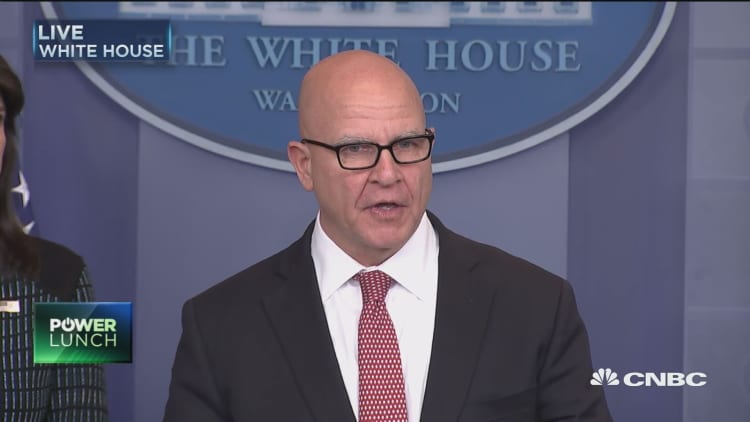
